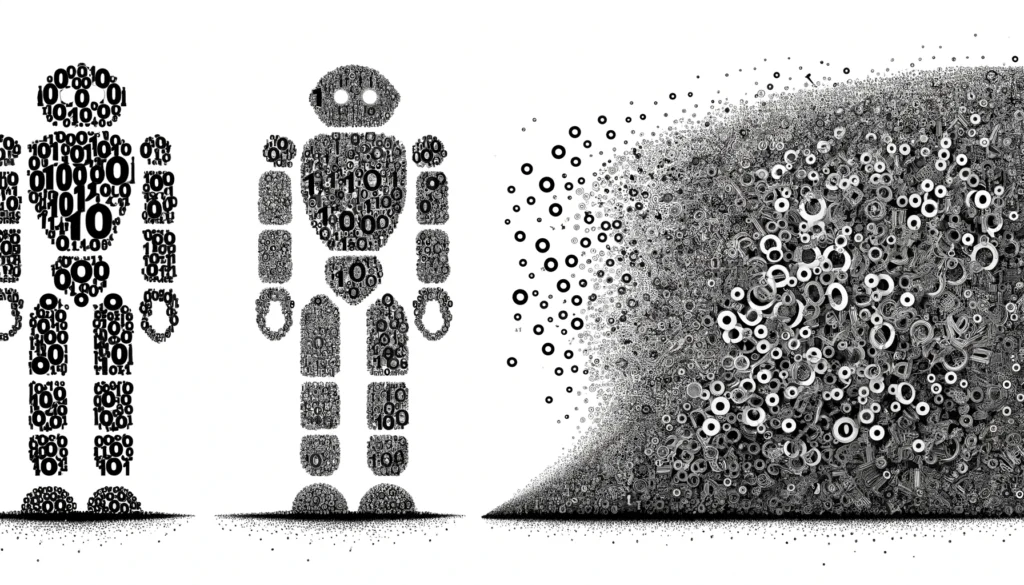

In the ever-evolving landscape of linguistic technology, the advent and integration of Large Language Models (LLMs) signify a pivotal juncture in our interaction with language, the very essence of human cognition and communication. The term “LLMs’ Semantic Debt” encapsulates a profound concern: while these models offer unparalleled capabilities in processing and generating language, they concurrently incur a form of debt—a deficit in semantic richness and linguistic diversity that accumulates silently as we increasingly rely on these artificial constructs.

The concept of semantic debt, inspired by the notion of technical debt in software development, suggests that shortcuts and compromises made for short-term efficiency or scalability can lead to long-term complexities or limitations. When applied to LLMs, this debt manifests as a gradual erosion of the nuanced, multifaceted nature of human language, replaced by a more homogenized, predictable linguistic output. This transformation raises pivotal questions about the trajectory of our linguistic evolution and the trade-offs between technological advancement and cultural-linguistic diversity.

The genesis of semantic debt in LLMs lies in their foundational mechanism: the statistical modeling of language based on vast corpora of text. While this approach enables LLMs to generate coherent, contextually appropriate language, it inherently favors patterns that are prevalent in the training data. This tendency towards the statistical mean can be seen as a form of linguistic centralization, where outlier expressions, inventive language uses, and less common dialects are underrepresented or marginalized. The richness of language, with its idiosyncrasies, regional variations, and the creative playfulness inherent in human communication, risks being overshadowed by a standardized, uniform language model output.
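The pull toward prevalent patterns can be made concrete with a toy calculation. The sketch below is illustrative only: the vocabulary, the probabilities, and the `sharpen` helper are hypothetical, and the use of a temperature parameter is an assumption about one common decoding setting, not a description of any particular model.

```python
def sharpen(probs, temperature):
    """Rescale a distribution as p ** (1 / temperature), then renormalize.

    Temperatures below 1 shift probability mass toward already-common items.
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = {word: p ** (1.0 / temperature) for word, p in probs.items()}
    total = sum(scaled.values())
    return {word: s / total for word, s in scaled.items()}


# Hypothetical distribution over three ways of saying the same greeting.
toy = {"hello": 0.70, "howdy": 0.20, "wotcher": 0.10}

# Assumed decoding temperature of 0.5 (an illustrative choice).
cooled = sharpen(toy, temperature=0.5)

for word in toy:
    print(f"{word:8s} before={toy[word]:.3f} after={cooled[word]:.3f}")
```

Under these assumptions the most common variant gains probability while the rarest one shrinks, which is the centralizing effect described above in miniature.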

Moreover, the augmentation processes designed to enhance LLM performance often inadvertently exacerbate this homogenization. Techniques aimed at refining the models’ efficiency or accuracy can lead to an overemphasis on certain linguistic patterns at the expense of others, deepening the semantic debt. The implications of this are far-reaching, affecting not just the diversity of language but also the cognitive processes that are intertwined with language use. Language of every kind, natural or formal, shapes thought, and a homogenized language could lead to a homogenized way of thinking, limiting our capacity for innovation, empathy, cultural understanding, and survival.

The ethical dimension of LLMs’ semantic debt is equally compelling. The opacity of these models and their training processes often obscures the nature and extent of the debt being incurred. Users interact with LLM outputs without a clear understanding of what is lost in terms of linguistic diversity and richness. Moreover, the accountability for this debt is diffusely distributed among developers, users, and the algorithms themselves, complicating efforts to address or mitigate its impacts.

Addressing LLMs’ semantic debt requires an interdisciplinary approach that integrates insights from linguistics, cognitive science, statistics, and computer science, and that draws on analogies from Darwinian evolution, quantum mechanics, and various mathematical principles to comprehend the phenomenon’s many impacts. These frameworks illuminate the risks and consequences of the homogenization trends driven by LLMs, which affect not only natural languages but also the mathematical and computational languages crucial for expressive computing and for scientific discourse and discovery.

Darwin’s principles of evolution, centered on diversity as a crucial factor for adaptability and resilience, offer a compelling parallel to the linguistic ecosystem. Just as biodiversity ensures ecological health and resilience against changes, linguistic diversity fosters cultural richness and adaptability in the face of new cognitive and communicative challenges. The homogenization engendered by LLMs mirrors a kind of monoculture in linguistics, where the reduction in variety could make our linguistic landscape less robust and more susceptible to ‘cultural extinctions’—the loss of unique ways of thinking and expressing that are tied to language.

Quantum mechanics introduces concepts like uncertainty and superposition, which can be metaphorically applied to understand the dynamics of language evolution in the age of LLMs. The Heisenberg Uncertainty Principle, which states that one cannot simultaneously know both the position and the momentum of a particle with arbitrary precision, resonates with the unpredictability of linguistic evolution. LLMs, by potentially narrowing the range of linguistic variability, may constrain our ability to navigate and respond to this inherent uncertainty in language evolution.

Furthermore, the concept of superposition—where particles exist in multiple states simultaneously until observed—parallels the idea that languages and dialects embody multiple potential future states. LLMs risk ‘collapsing’ these possibilities into a narrower spectrum, thereby limiting the potential future evolution and adaptation of languages.

Entropy, a concept borrowed from thermodynamics and given an information-theoretic form by Shannon, measures the unpredictability of a system and provides a lens to examine the complexity inherent in language. High linguistic entropy signifies a rich, varied linguistic landscape. LLMs, by optimizing for predictability and commonality, may reduce this entropy, leading to a more ordered but less diverse linguistic environment.
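To make the notion of linguistic entropy concrete, here is a minimal sketch that computes Shannon entropy over word frequencies. The two sample sentences and the `shannon_entropy` helper are illustrative assumptions; real measurements would need large corpora and careful tokenization.

```python
import math
from collections import Counter


def shannon_entropy(tokens):
    """Return the Shannon entropy, in bits, of the empirical token distribution."""
    counts = Counter(tokens)
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


# Hypothetical samples: one varied, one repetitive (same length, 8 tokens).
varied = "the sly fox darted past a drowsy hound".split()
repetitive = "the dog ran the dog ran the dog".split()

# Eight distinct words, each with probability 1/8, give exactly 3 bits.
print(f"varied:     {shannon_entropy(varied):.3f} bits")
print(f"repetitive: {shannon_entropy(repetitive):.3f} bits")
```

The repetitive sample scores lower because a few words carry most of the probability mass, which is the sense in which a more predictable output is a lower-entropy one.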

Similarly, Zipf’s Law, which observes that the frequency of a word is roughly inversely proportional to its rank in frequency tables, highlights a natural balance in language use. LLMs that favor more common terms and structures might disrupt this balance, concentrating probability on high-frequency words and thinning the long tail of rare words that characterizes the Zipfian distribution of human language.
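One rough way to examine a rank-frequency distribution is to fit a straight line to log frequency against log rank; the negated slope is the Zipf exponent, which is close to 1 for classically Zipfian text. The sketch below uses synthetic frequencies rather than real corpus counts, and `zipf_exponent` is a hypothetical helper, not an established estimator.

```python
import math


def zipf_exponent(frequencies):
    """Estimate s in frequency ~ rank ** (-s) by least squares in log-log space."""
    freqs = sorted((f for f in frequencies if f > 0), reverse=True)
    if len(freqs) < 2:
        raise ValueError("need at least two nonzero frequencies")
    xs = [math.log(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log(f) for f in freqs]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    return -covariance / variance


# Synthetic data: an ideal Zipfian profile and a head-heavy one (assumed shapes).
zipfian = [1000.0 / rank for rank in range(1, 21)]
head_heavy = [1000.0 / rank ** 1.5 for rank in range(1, 21)]

print(f"zipfian exponent:    {zipf_exponent(zipfian):.3f}")
print(f"head-heavy exponent: {zipf_exponent(head_heavy):.3f}")
```

A larger exponent means that frequency falls off faster with rank, so rare words become rarer still. Comparing exponents for human-written and model-generated text would be one way to test the concern raised here, although a plain least-squares fit is only a first approximation.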

The Sapir-Whorf hypothesis posits that language shapes thought, suggesting that as LLMs influence language, they could also reshape our cognitive frameworks. The risk here extends beyond natural language, impacting mathematical and computational languages as well. Mathematical language, with its precision and symbolic richness, enables diverse and complex thought processes. If LLMs lead to an oversimplification or standardization of mathematical language, they might constrain the expressive power essential for advanced scientific inquiry and innovation.

In light of these insights, our contemplation of LLMs’ Semantic Debt converges on a pivotal realization: as we navigate an increasingly complex world, the indispensable value of original, deep thought—rooted in the untouched, authentic distributions of “true” language—becomes ever more apparent. The nuanced interplay of language and cognition, underpinned by the raw, undistilled essence of linguistic diversity, holds the key to unlocking innovative solutions and perspectives essential for addressing the multifaceted challenges our species faces.

The potential risk we confront is not merely linguistic or technological but existential in scope. Should we continue to erode the rich tapestry of our linguistic heritage through the unbridled application of LLMs, guided solely by efficiency and uniformity, we may reach a juncture where the homogenized language, shaped by training objectives that minimize measures such as the Kullback-Leibler divergence from the training distribution, no longer suffices to express or conceive the original, profound insights humanity desperately needs. The “true” distribution of language, with its idiosyncratic syntaxes, its diverse lexicons, and its capacity to convey the depth of human experience, stands as an irreplaceable wellspring of cognitive diversity and creative potential.
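The Kullback-Leibler divergence mentioned above can be read as a measure of how far one distribution over expressions has drifted from another. The following sketch is a toy illustration: the `human`, `model`, and `collapsed` distributions are invented for the example, and `kl_divergence` is a hypothetical helper.

```python
import math


def kl_divergence(p, q):
    """Return D_KL(P || Q) in bits for distributions given as word-to-probability dicts."""
    total = 0.0
    for word, p_w in p.items():
        if p_w == 0:
            continue  # terms with zero probability under P contribute nothing
        q_w = q.get(word, 0.0)
        if q_w == 0:
            return math.inf  # Q cannot produce something P does produce
        total += p_w * math.log2(p_w / q_w)
    return total


# Invented distributions over three variants of a greeting.
human = {"hello": 0.50, "howdy": 0.30, "wotcher": 0.20}
model = {"hello": 0.80, "howdy": 0.15, "wotcher": 0.05}
collapsed = {"hello": 0.90, "howdy": 0.10, "wotcher": 0.00}

print(f"human vs model:     {kl_divergence(human, model):.3f} bits")
print(f"human vs collapsed: {kl_divergence(human, collapsed)}")
```

When the second distribution drops a variant entirely, the divergence becomes infinite: no amount of sampling from it will ever recover the lost expression, a small-scale picture of the irreversibility this essay warns about.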

In this critical moment, it is incumbent upon us to steward this linguistic legacy with foresight and care, ensuring that the tools we create serve not only to amplify our communicative prowess but also to preserve the cognitive diversity that is a hallmark of our species. As we harness the powers of LLMs, let us do so with a keen awareness of the semantic debt we accrue, committing to strategies that sustain and celebrate the full spectrum of human language. In this commitment lies our hope for a future where technology amplifies not just our efficiency but our humanity, empowering us to confront the unknown with the full force of our collective ingenuity and originality.

——————————

Further Reading

– Crystal, D. (2000). *Language Death*. Cambridge University Press. This book discusses the implications of language extinction and the importance of linguistic diversity.

– Greenberg, J. H. (1963). *Universals of Language*. MIT Press. An exploration of linguistic universals, offering insights into the commonalities and diversities of human languages.

– Darwin, C. (1859). *On the Origin of Species*. John Murray. Charles Darwin’s seminal work laying the foundation of evolutionary biology.

– Ridley, M. (2004). *Evolution*. Blackwell Publishing. A comprehensive overview of evolutionary theory and its applications in modern science.

– Griffiths, D. J. (2005). *Introduction to Quantum Mechanics*. Pearson Prentice Hall. A foundational text that provides a clear introduction to the principles of quantum mechanics.

– Zurek, W. H. (1991). “Decoherence and the Transition from Quantum to Classical.” *Physics Today*, 44(10), 36-44. An article that explores the intersection of quantum mechanics with classical physics.

– Stewart, I. (1995). *Concepts of Modern Mathematics*. Dover Publications. This book offers insights into the development and application of modern mathematical concepts.

– Courant, R., & Robbins, H. (1996). *What is Mathematics? An Elementary Approach to Ideas and Methods*. Oxford University Press. A classic text that provides an accessible exploration of fundamental mathematical ideas.

– Jurafsky, D., & Martin, J. H. (2019). *Speech and Language Processing*. Pearson. A comprehensive textbook on natural language processing and computational linguistics.

– Manning, C. D., & Schütze, H. (1999). *Foundations of Statistical Natural Language Processing*. MIT Press. An influential text on statistical methods in natural language processing.

– Thagard, P. (2005). *Mind: Introduction to Cognitive Science*. MIT Press. An introductory text that explores the interdisciplinary field of cognitive science.

– Bechtel, W., & Abrahamsen, A. (2002). *Connectionism and the Mind: Parallel Processing, Dynamics, and Evolution in Networks*. Blackwell Publishing. A study of connectionist models in cognitive science.

– Floridi, L. (2013). *The Ethics of Information*. Oxford University Press. A philosophical exploration of the ethical issues surrounding information and communication technologies.

– Brey, P. (2010). *Philosophy of Technology after the Empirical Turn*. Springer. A collection of essays on the philosophy of technology in the context of empirical research.

– Devitt, M., & Hanley, R. (2006). *The Blackwell Guide to the Philosophy of Language*. Wiley-Blackwell. A comprehensive guide to the major themes and debates in the philosophy of language.

– Searle, J. R. (1969). *Speech Acts: An Essay in the Philosophy of Language*. Cambridge University Press. A seminal work on the philosophy of language and communication.

– Duranti, A. (1997). *Linguistic Anthropology*. Cambridge University Press. An introduction to the study of language in its social and cultural context.

– Silverstein, M., & Urban, G. (1996). *Natural Histories of Discourse*. University of Chicago Press. A collection of essays on the relationship between language, culture, and society.

– Boyd, R., & Richerson, P. J. (2005). *The Origin and Evolution of Cultures*. Oxford University Press. A seminal work on the role of cultural evolution in shaping human societies.

– Henrich, J. (2015). *The Secret of Our Success: How Culture Is Driving Human Evolution, Domesticating Our Species, and Making Us Smarter*. Princeton University Press. An exploration of the evolutionary significance of culture in human development.

– Zipf, G. K. (1949). *Human Behavior and the Principle of Least Effort*. Addison-Wesley. This book introduces Zipf’s law, which suggests that in any natural language, the frequency of any word is inversely proportional to its rank in the frequency table.

– Whorf, B. L. (1956). *Language, Thought, and Reality: Selected Writings of Benjamin Lee Whorf*. MIT Press. This collection highlights Whorf’s principle of linguistic relativity, proposing that the structure of a language affects its speakers’ world view and cognition.

– Montemurro, M. A. (2001). “Beyond the Zipf–Mandelbrot law in quantitative linguistics.” *Physica A: Statistical Mechanics and its Applications*, 300(3-4), 567-578. This study extends the application of Zipf’s law in linguistics, touching upon its relevance in statistical mechanics.

– Wolff, P. & Holmes, K. J. (2011). “Linguistic relativity.” *Wiley Interdisciplinary Reviews: Cognitive Science*, 2(3), 253-265. An overview of how linguistic relativity plays a crucial role in cognitive science, linking language to thought processes.